My First Encounter with AI: ELIZA

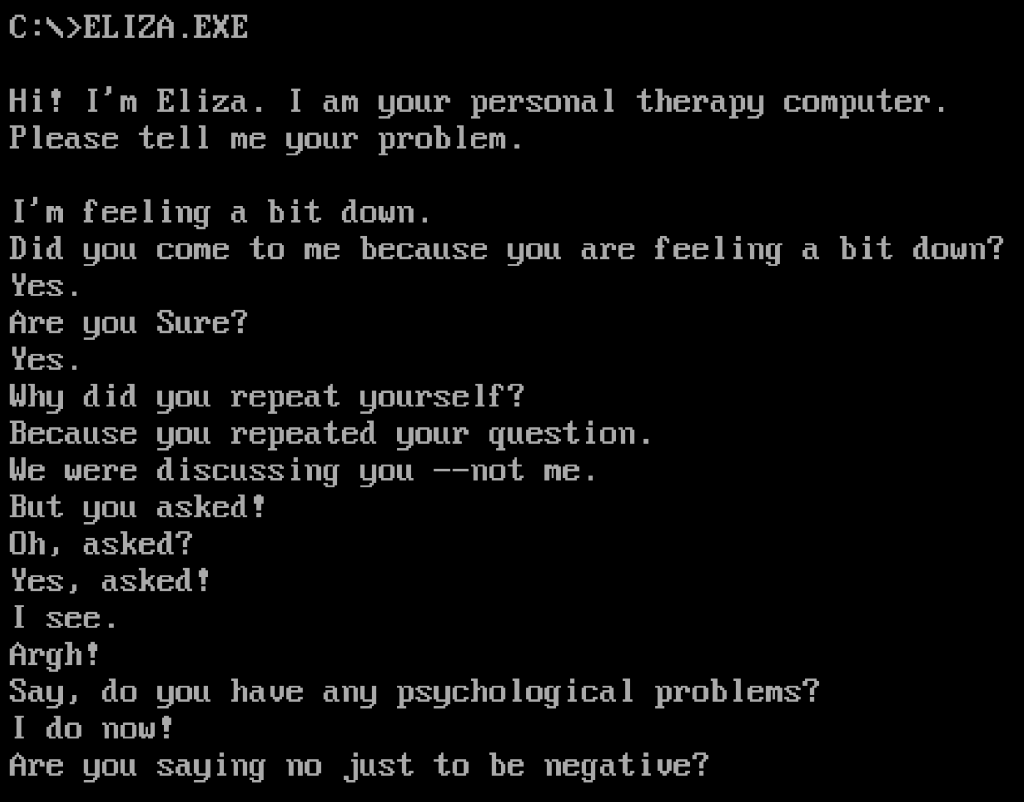

Sometime around 1982, I came across a BASIC port of the 1966 chatbot ELIZA. What first required a mainframe to run was now running in my bedroom on an 8-bit personal computer. It was simplistic and even frustrating at times (see photo), but it was also awe-inspiring as a first exposure to AI outside of science fiction.

This early example of rule-based natural language processing inspired me and many others to pursue not just programming but machine learning. It’s also the namesake of the Eliza Effect: the tendency for people to unconsciously attribute human-like understanding and intelligence to computer programs, even when they only follow simple, scripted rules.

The Original ELIZA and Its Legacy

ELIZA was created in the mid-1960s at MIT by Joseph Weizenbaum. It ran on a mainframe and was written in MAD-SLIP, a language unfamiliar to most modern programmers. The most famous version was the DOCTOR script, which mimicked the responses of a non-directive psychotherapist. It would take a user’s input, match it against simple patterns, and return a response designed to keep the user talking. It didn’t understand anything, but it simulated understanding well enough to catch people off guard.
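To make the "match a pattern, hand back a response" loop concrete, here is a minimal Python sketch of the general idea. It is not Weizenbaum's MAD-SLIP code or the real DOCTOR script: the rules, the pronoun table, and the names `RULES`, `REFLECTIONS`, `reflect`, and `respond` are all made up for illustration.

```python
import re

# Hypothetical pronoun swaps so an echoed fragment reads from the program's side.
REFLECTIONS = {
    "i": "you", "me": "you", "my": "your", "am": "are",
    "you": "I", "your": "my",
}

# Hypothetical rules: (pattern, response template). Earlier rules win.
RULES = [
    (re.compile(r"\bi need (.+)", re.IGNORECASE), "Why do you need {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (mother|father)\b", re.IGNORECASE), "Tell me more about your {0}."),
]

# Used when no rule matches, to keep the conversation moving.
DEFAULT = "Please go on."


def reflect(fragment):
    """Swap first- and second-person words in the captured fragment."""
    words = fragment.lower().split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(text):
    """Return the template of the first matching rule, filled with the reflected capture."""
    cleaned = text.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(cleaned)
        if match:
            return template.format(reflect(match.group(1)))
    return DEFAULT


print(respond("I need my coffee."))   # Why do you need your coffee?
print(respond("The weather is nice"))  # Please go on.
```

Nothing in this loop models meaning. The apparent attentiveness comes entirely from echoing the user's own words back inside a canned template.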

Weizenbaum was surprised, and eventually disturbed, by how people reacted to ELIZA. Secretaries at MIT would ask for time alone with the machine, and he famously described how even his own secretary asked him to leave the room so she could converse with ELIZA in private. This unearned attribution of intelligence became a central concern for him, and later a cautionary theme in his 1976 book Computer Power and Human Reason.

The Eliza Effect: Misplaced Trust in Machines

The term “Eliza Effect” was later formalized and referenced in a 1996 paper by Batya Friedman and Peter Kahn. It describes a cognitive bias where users assume deeper understanding or human-like reasoning in a program that’s only performing surface-level operations. This isn’t just a footnote in history—it’s still a relevant concept, especially with the rise of modern chatbots and large language models that generate far more convincing output than ELIZA ever could.

The Eliza Effect is especially important when thinking about how users interact with AI in healthcare, education, and customer service. When someone assumes that an AI “knows” or “understands” because it sounds fluent, it can lead to misplaced trust, missed errors, and even emotional attachment. This concept laid early groundwork for today’s concerns about anthropomorphizing AI systems and the ethical implications that follow.

The ELIZA Archaeology Project: Reconstructing the Past

For years, ELIZA’s original MAD-SLIP source code was presumed lost or incomplete. What we had were ports, rewrites, and second-hand documentation. That changed with the emergence of the ELIZA Archaeology Project, which has done significant work to recover, verify, and preserve the historical record of ELIZA and its variants.

The project, led by a small team of software historians and hobbyists, has unearthed original source files, transcripts, academic papers, and even artifacts like punch cards and terminal printouts. They’ve reassembled and annotated the code, provided translations into more modern languages, and tracked down original system behaviors that were often lost in the simplified ports. This isn’t just nostalgia—it’s a valuable look into how early AI systems were constructed, debugged, and conceptualized.

There’s a browser-based version available on the project’s site where you can run ELIZA as it originally appeared, not just as the BASIC clone many of us knew in the early personal computing days. The site also includes detailed documentation and analysis of ELIZA’s internal logic and scripting structure, which offers insight into how such a simple engine could generate such outsized reactions.

What ELIZA Inspired

ELIZA didn’t just inspire users to pursue programming—it inspired new branches of computing. It marked one of the first moments when people saw the potential of human-computer dialogue. It fed into early interest in NLP, human-computer interaction, and eventually conversational interfaces. Even when the limitations were obvious, the idea that a machine could “talk back” opened doors.

It also created a long-lasting framework for chatbot design. Many chatbot architectures, even into the 1990s and early 2000s, followed ELIZA’s script-based approach, with pattern-matching engines and substitution-based outputs. Only with recent advances in neural networks and deep learning have we moved decisively away from those roots, and even now, many commercial chatbots blend rule-based elements with statistical models to balance reliability and flexibility.
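As a rough illustration of that blend, the Python sketch below tries hand-written rules first and only falls back to a scoring step when no rule matches. Everything here is an assumption made for the example: the rules, the intents, and the replies are invented, and the word-overlap score is a deliberately crude stand-in for a real statistical model, not how any particular commercial product works.

```python
import re
from collections import Counter

# Rule layer: fixed answers for inputs we want handled predictably (hypothetical).
RULES = [
    (re.compile(r"\b(opening hours|open)\b", re.IGNORECASE),
     "We are open 9 to 5, Monday to Friday."),
]

# Stand-in for a statistical layer: example wording for each hypothetical intent.
INTENT_EXAMPLES = {
    "refund": "i want my money back refund return order",
    "shipping": "where is my package delivery shipping late",
}
INTENT_REPLIES = {
    "refund": "I can help you start a refund.",
    "shipping": "Let me look up your delivery status.",
}
FALLBACK = "Sorry, could you rephrase that?"


def overlap(text, example):
    """Count the words shared between the input and an intent's example text."""
    shared = Counter(text.lower().split()) & Counter(example.split())
    return sum(shared.values())


def reply(text):
    # 1. Rules win whenever they match, which keeps key answers reliable.
    for pattern, answer in RULES:
        if pattern.search(text):
            return answer
    # 2. Otherwise pick the best-scoring intent, if any words overlap at all.
    best = max(INTENT_EXAMPLES, key=lambda name: overlap(text, INTENT_EXAMPLES[name]))
    if overlap(text, INTENT_EXAMPLES[best]) == 0:
        return FALLBACK
    return INTENT_REPLIES[best]


print(reply("When are you open?"))
print(reply("my package is late"))
```

The ordering is the design choice worth noticing: the rule layer stays ELIZA-simple and auditable, while the scoring layer absorbs phrasing nobody wrote a rule for.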

The psychological and cultural footprint of ELIZA was also significant. It showed that machines didn’t need real intelligence to evoke emotional responses. That realization continues to shape conversations about the ethics of AI design, the responsibility of developers, and the boundaries of machine interaction.

Reflecting on the Present

Trying ELIZA again today is a strange experience. It’s primitive and transparent, but still oddly familiar. The gaps between input and response are obvious, but so is the intent behind it: to explore the possibility of conversation with a machine, no matter how limited. For anyone who saw it early on, it recalls a time when the idea itself was novel enough to spark imagination.

Compared to today’s systems, it barely qualifies as a prototype. But it still matters. Not because of what it could do, but because of what it made people believe was possible.
