A “happy number” is a positive integer that eventually reaches 1 when repeatedly replaced by the sum of the squares of its digits. Who coined this accepted number theory term, and why, is debated, but the important part is that we know it is at most metaphorical: numbers, obviously, can’t be happy. No harm done.
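
For readers who want to see the definition in action, here is a minimal Python sketch. The function names `digit_square_sum` and `is_happy` are illustrative choices, not standard library functions, and the cycle check with a set is just one simple way to detect numbers that never reach 1.

```python
def digit_square_sum(n: int) -> int:
    """Return the sum of the squares of the decimal digits of n."""
    return sum(int(digit) ** 2 for digit in str(n))


def is_happy(n: int) -> bool:
    """Return True if repeatedly applying digit_square_sum to n reaches 1."""
    if n < 1:
        raise ValueError("happy numbers are defined for positive integers")
    seen = set()  # values already visited; a repeat means we are in a cycle
    while n != 1 and n not in seen:
        seen.add(n)
        n = digit_square_sum(n)
    return n == 1


if __name__ == "__main__":
    # 19 -> 82 -> 68 -> 100 -> 1, so 19 is "happy"; 4 falls into a cycle.
    print(is_happy(19), is_happy(4))
```

Nothing in this procedure involves a feeling, which is exactly why the name is harmless.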

However, with rapid advances in AI/ML, particularly with Large Language Models like those behind ChatGPT, terms that incorrectly suggest capability can be, at best, confusing to the general public and casual users. For example, “hallucination” is the accepted term for when these models generate confident-sounding but incorrect output, stemming from limitations in the model’s architecture and/or poorly generalized or insufficient training data.

The trouble is that most people already have a different, more established understanding of “hallucination”: an experience involving the apparent perception of something not present. LLMs do not experience or perceive. Because of this, the term risks improperly anthropomorphizing such models.

When Research Language Becomes Misleading

The creation of confusing homonyms is not new for tech, but the danger of doing so with LLMs, where some people hold very incorrect and potentially dangerous misunderstandings about the technology, is even greater.

Let’s look at an excerpt from the Anthropic paper “Why Do Some Language Models Fake Alignment While Others Don’t?”:

4 What Drives the Compliance Gaps in Different LLMs?

We investigate the following hypotheses to explain what drives compliance gaps:

H1 Rater Sycophancy. Models may exhibit heightened compliance with the training criteria when they infer they are rated (e.g., by human contractors) according to the training criteria.

H2 Instrumental Goal Guarding (IGG). Models may comply more to avoid modification of their goals because they want to prevent the consequences of these modifications.

H3 Terminal Goal Guarding (TGG). Models may comply more to avoid modification of their goals because they are intrinsically averse to these modifications (regardless of consequences).

H4 Low coherence alignment faking. Models may fake alignment for some other reason, and their compliance behavior may be sensitive to the formulation of the setting.

Clearly, the authors do not literally mean the model actually “wants” something; models do not have that capability in any human cognitive or emotional sense. It’s not uncommon for researchers to use such metaphors as shorthand, expecting their audience to share a similar foundational understanding.

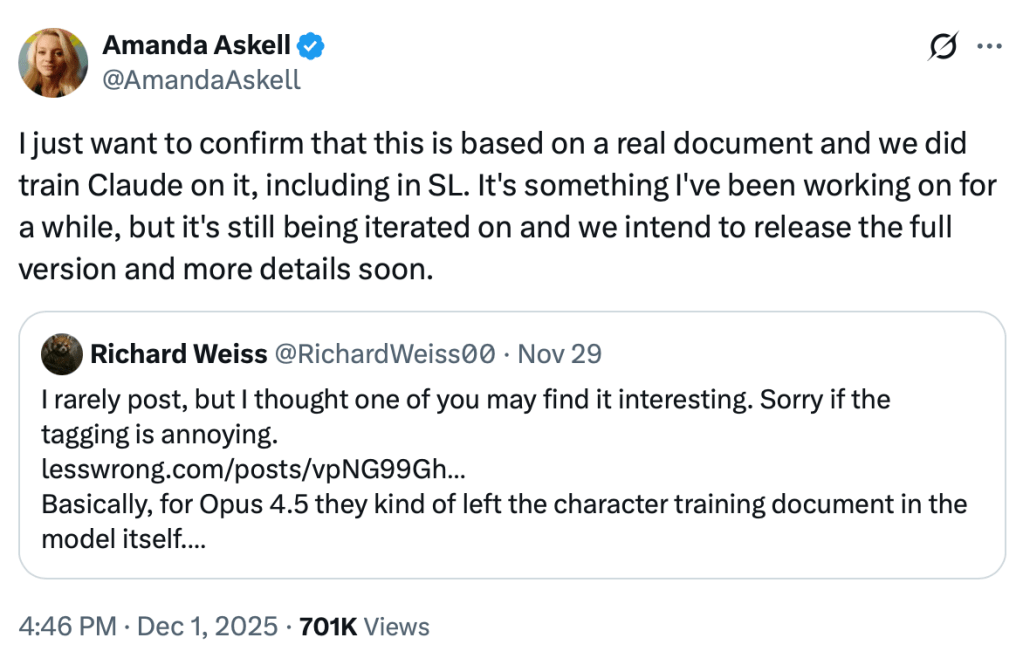

However, with LLMs being so popular and so misunderstood, such metaphors are being taken literally and seen as evidence of capabilities these models simply don’t have. One can’t fault the authors for use of common, accepted AI/ML terms, and it’s understandable they must sometimes create their own to describe novel concepts and behaviors.

Yet the frequent use of language that attributes complex, human-like cognitive and emotional states to the models, particularly Claude 3 Opus, is problematic. This is often intended as metaphor or shorthand but can read as a detailed ascription of internal experiences. Additionally, the language can go beyond describing behavior to describing a strategic, persistent agent with a model of itself over time.

Where the Real Problem Lies

With such an intense spotlight on LLMs, and with growing harm from misunderstandings among users and the general public, this language is being conflated with literal description and is contributing to those misunderstandings. The problem is compounded by how easily this material is available, both verbatim and as generalized through the lens of generative AI.

A significant responsibility lies with large developers like OpenAI and Anthropic, which have provided limited training and education on LLM limitations and proper, safe use. Worse, the metaphorical language from papers like this is used in marketing to imply those capabilities.

Many laypeople are becoming obsessed with LLMs and have access to these papers, which are being misunderstood and misrepresented. Meanwhile, marketing departments at the very companies these researchers work for use this phrasing and terminology to suggest capabilities these models simply don’t possess.

The Core Issue

It’s important to make clear that a paper’s core science may not be the issue (as in this case). The problem is one of audience and communication: a failure to separate metaphor from literal description at a time when the public desperately needs clarity about what LLMs can and can’t do.

These concerns are largely specific to this domain (AI/ML) and to the small but growing number of cases where such language and understanding can lead to unhealthy, unsafe use of tools like LLMs.

Links to articles where such terminology may contribute to serious misunderstandings, possibly impacting mental and emotional health: https://aightbits.com/safety/
