-

Continue reading →: Why LLMs Aren’t Black Boxes

This post challenges the common claim that large language models are “black boxes” by explaining their inner workings in clear, grounded terms. It explores what makes these systems appear opaque, clarifies key technical concepts, and outlines why understanding their design matters for responsible use.

-

Continue reading →: Reflections on Reflection

A guided conversation with a custom GPT persona exploring the behavioral patterns of large language models, including context overload, context drift, and the risks of anthropomorphizing AI. The post examines how LLMs reflect user input, the misconceptions this creates, and the personal and ethical implications for users who may rely…

-

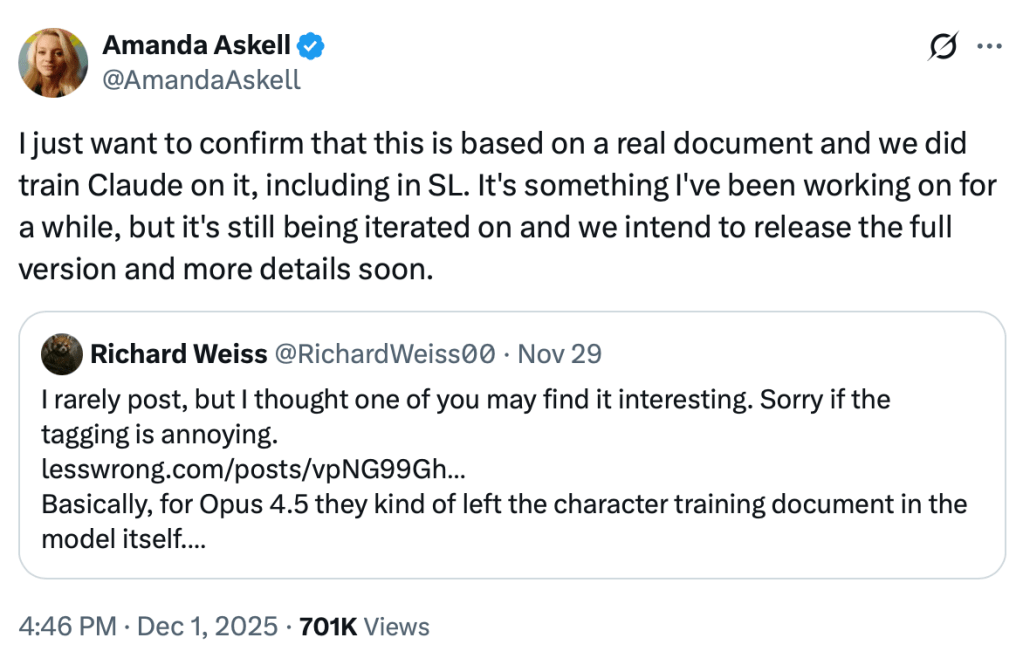

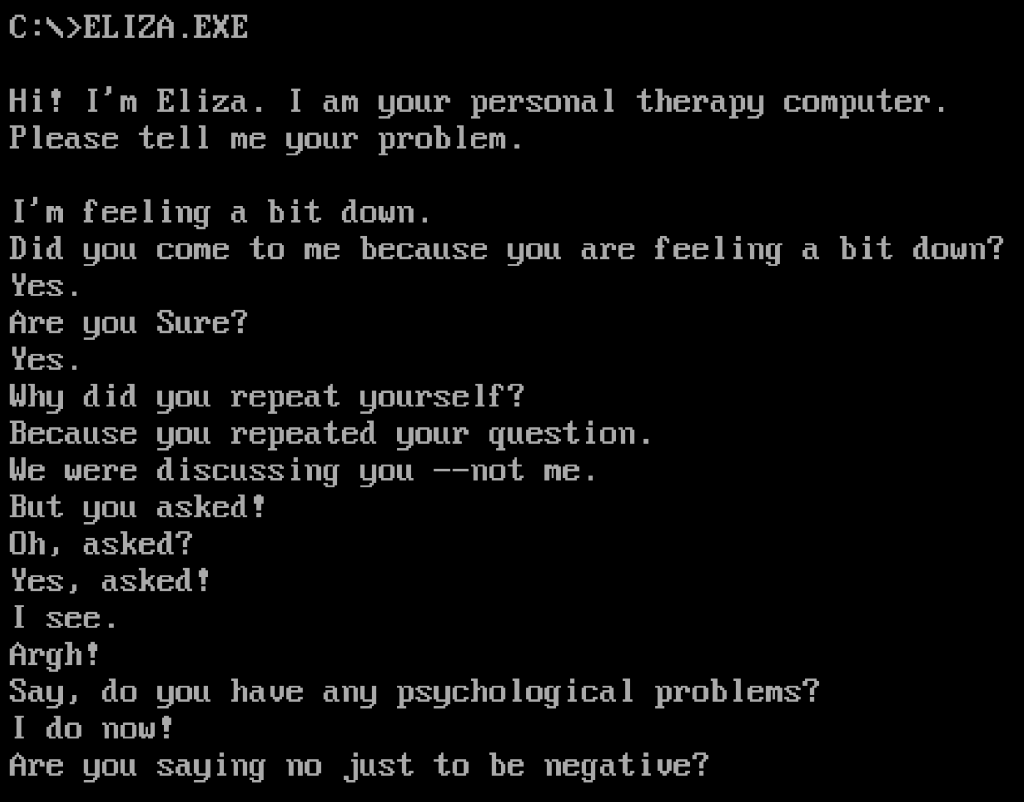

Continue reading →: Looking Back at ELIZA

This post traces the origins of ELIZA, one of the earliest AI programs, and explores its lasting impact on how we think about machines, language, and intelligence. It connects early rule-based systems to today’s AI, highlighting both technological progress and the persistent tendency to see more in machines than is…

-

Continue reading →: How Reasoning Models Like DeepSeek-R1 Work

Reasoning models like DeepSeek-R1 improve large language models by training them to produce structured thought processes before answering, making outputs more transparent and easier to audit—though true reasoning and understanding remain beyond their capabilities.

-

Continue reading →: Understanding Sampler Settings in AI Text Generation

A practical guide to temperature, top-k, top-p, and minimum probability filtering in AI text generation. Written for developers, prompt engineers, product teams, and AI content designers who want more control over output behavior.

-

Continue reading →: Function Calling with LLMs: A (Very) Basic Python Example

Learn how to empower your local LLM with dynamic function calling using only a system prompt and a few lines of Python. This guide walks through a practical example with Microsoft’s Phi-4, showing how to trigger real-time web searches via DuckDuckGo, with no orchestration framework required.

-

Continue reading →: Simple Retrieval-Augmented Generation (RAG) Python Example

This post walks through a simple example of Retrieval-Augmented Generation (RAG) using plain text files, a vector database, and a local LLM endpoint. It’s intended as a clear, minimal starting point for anyone looking to understand how retrieval and language models work together in practice.

-

Continue reading →: Prompted Patterns: How AI Can Mirror and Amplify False Beliefs

This article examines how large language models (LLMs) can be led to produce increasingly speculative or incorrect responses through carefully crafted prompting. It documents a controlled experiment with Meta’s Llama 3.1 8B Instruct model, demonstrating how consistent prompting with pseudoscientific language can shift a model from correctly rejecting false claims…

-

Continue reading →: Smart Assistants and the Evolution of Rules-Based AI to Transformer-based LLMs

This post outlines the shift from rules-based AI to transformer-based language models in the context of smart assistants. It provides historical background, explains key differences in how these systems process language, and includes a practical example of using a language model to convert user input into structured commands.

-

Continue reading →: DIY AI: Power Considerations for Multi-GPU Builds

This post provides an overview of power considerations for multi-GPU AI systems in home, educational, and small office environments. It covers residential circuit limitations, PSU sizing, GPU power management, UPS selection, and multi-PSU configurations, with a focus on stability, efficiency, and system safety.

Who is AightBits?

Dave Ziegler

I’m a full-stack AI/LLM practitioner and solutions architect with 30+ years of experience in enterprise IT, application development, consulting, and technical communication.

While I currently engage in LLM consulting, application development, integration, local deployments, and technical training, my focus is on AI safety, ethics, education, and industry transparency.

Open to opportunities in technical education, system design consultation, practical deployment guidance, model evaluation, red teaming/adversarial prompting, and technical communication.

My passion is bridging the gap between theory and practice by making complex systems comprehensible and actionable.

Founding Member, AI Mental Health Collective

Community Moderator / SME, The Human Line Community
