-

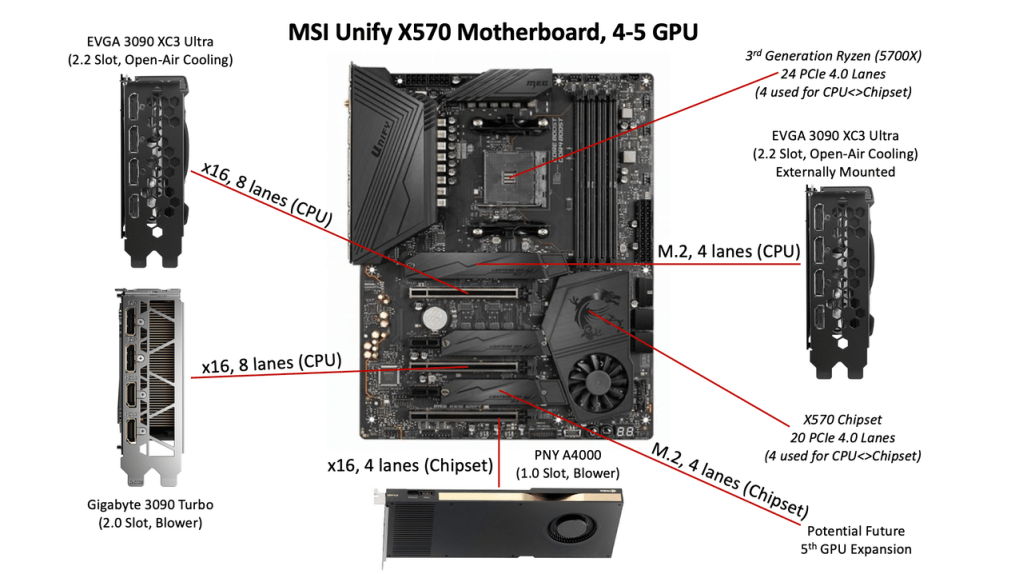

Continue reading →: DIY AI: PCIe Considerations for Multi-GPU Builds

Continue reading →: DIY AI: PCIe Considerations for Multi-GPU BuildsThis post provides a detailed overview of PCIe considerations for multi-GPU system design, with a focus on small-scale AI training and inference workloads. It covers PCIe bandwidth by generation, CPU vs. chipset lane allocation, and real-world motherboard layout examples. Additional topics include slot behavior, M.2 GPU expansion options, and practical…

-

Continue reading →: User-Induced Bias in Language Models: A Simple Demonstration

Large Language Models (LLMs) like ChatGPT are powerful language tools and excellent mimics. Because of this mimicry, they can inadvertently create echo chambers of misinformation by mirroring not only our incorrect beliefs but also our tone—amplifying confirmation bias over time. I thought it was a good time to repost this…

-

Continue reading →: Sparse Mixture of Experts (SMoE) Overview

This post explains how sparse Mixture of Experts (sMoE) models like DeepSeek-V3 and Mixtral work, focusing on how routing, expert specialization, and parameter activation contribute to their efficiency. It addresses common misconceptions—particularly around training, inference behavior, and hardware requirements—and clarifies what these models are (and aren’t) optimized for.

-

Continue reading →: Simple AI Agent Python Example

Yesterday, I shared a high-level overview of agentified AI. Today, let’s look at a simple but practical example. As a quick reminder, an AI agent, at its core, is a system that: In many ways, this is standard automation. What’s changed is that large language models (LLMs) make certain types…

-

Continue reading →: AI Agents Under the Hood

Agentic AI systems may look intelligent and autonomous, but it’s the surrounding software — not the language model itself — that creates that illusion. This post breaks down how large language models remain static and stateless, and how function calling, scheduling, and orchestration at the application layer give rise to…

-

Continue reading →: Introduction to Named Entity Recognition (NER)

Named Entity Recognition (NER) is the process of extracting structured data—like names, emails, and addresses—from unstructured text. This post walks through a practical example using a local language model to transform messy, real-world input into clean, structured output. While the technique works just as well with hosted models, it highlights…

-

Continue reading →: Introduction to In-Context Learning

Large language models can generate impressive results, but they don’t learn or evolve after training. In-context learning offers a powerful workaround—letting us guide a model’s behavior using carefully designed prompts and examples, without the need for retraining. This post breaks down the core techniques behind in-context learning, from zero-shot to…

-

Continue reading →: Parameters & Context: Tool & Material, Not Long-Term & Short-Term Memory

Many people think of a language model’s parameters as “long-term memory” and user prompts as “short-term memory”—but that analogy falls short. This post breaks down the real roles of parameters, context, activations, and output in Large Language Models, explaining why it’s more accurate to think of the model as a…

-

Continue reading →: ChatGPT 4.5 Passes the Turing Test ‐ But What Does That Really Mean?

A closer look at a recent study claiming GPT-4.5 passed a rigorous Turing Test. Explains the experiment’s design, how it differs from past Turing Tests, and why passing it doesn’t indicate true intelligence or understanding. Includes historical context, key findings, and clarification of common misconceptions about what the Turing Test…

-

Continue reading →: Introduction to Chain of Thought (CoT) Prompting

This post explains the concept of Chain of Thought (CoT) prompting, a technique for guiding language models to produce more structured and logically coherent responses. It outlines how CoT differs from standard prompting, provides example prompts for both conceptual explanations and logic puzzles, and highlights how added structure can influence…

Who is AightBits?

Dave Ziegler

I’m a full-stack AI/LLM practitioner and solutions architect with 30+ years enterprise IT, application development, consulting, and technical communication experience.

While I currently engage in LLM consulting, application development, integration, local deployments, and technical training, my focus is on AI safety, ethics, education, and industry transparency.

Open to opportunities in technical education, system design consultation, practical deployment guidance, model evaluation, red teaming/adversarial prompting, and technical communication.

My passion is bridging the gap between theory and practice by making complex systems comprehensible and actionable.

Founding Member, AI Mental Health Collective

Community Moderator / SME, The Human Line Community

Let’s connect

Subscribe

Stay updated with my latest content on LLM best practices, concepts, code, and more by joining my newsletter.